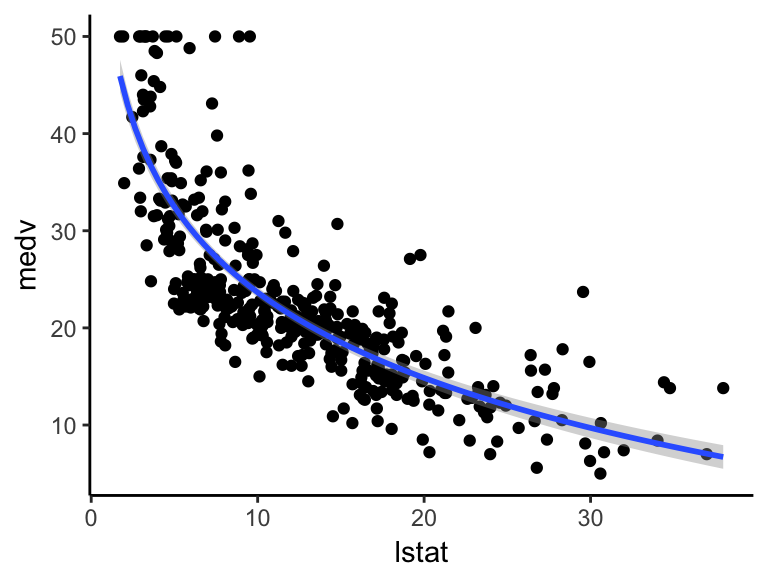

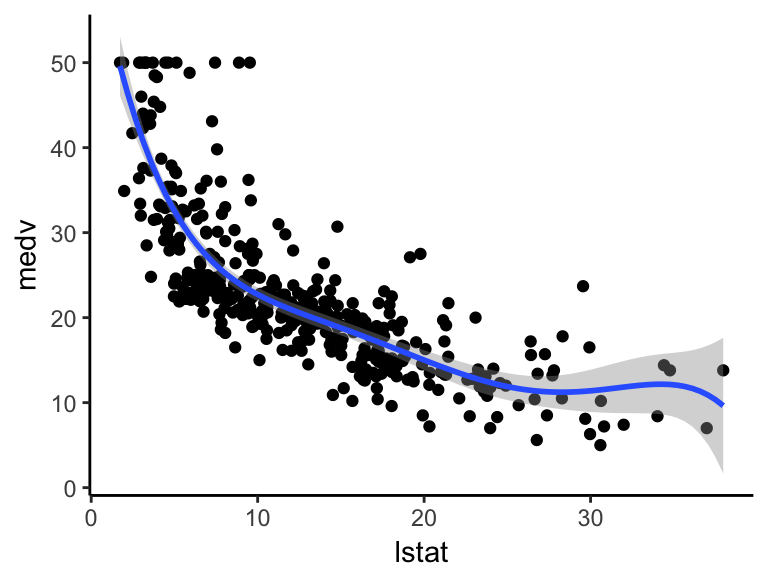

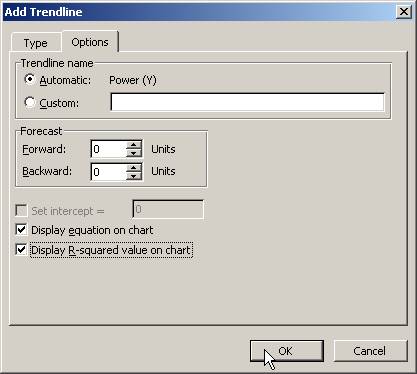

# How to Perform Nonlinear Regression in Excel

Running promotions with discounts is one of the most popular and effective ways to drive sales. A sales manager has collected data points on discount percentage versus sales. Her main purpose is to build a model that predicts sales from the discount level.

A polynomial of degree $n$ is a function of the form $f(x) = a_n x^n + a_{n-1} x^{n-1} + \dots + a_1 x + a_0$.

Before running Solver, initialize the decision variables to 1000, 1000, and 0.5 in cells C3, C4, and C5 respectively. You can then use Solver to determine the optimal values of the decision variables A0, A1, and B1 that minimize the objective function.

A function that minimizes the sum of the squares of the distances prefers to be 10 units away from each of two points, where the sum-of-squares is 200, rather than 5 units away from one point and 15 units away from another, where the sum-of-squares is 250. If the scatter is Gaussian (bell-shaped), the curve determined by minimizing the sum-of-squares is most likely to be correct.
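In a least-squares fit, the objective function that Solver minimizes is usually the sum of squared residuals. As a sketch only: the symbols $x_i$ (discount level), $y_i$ (observed sales), $N$ (number of data points), and $f$ (whatever model form is chosen) are assumptions introduced here for illustration, not notation from the original example.

$$
\min_{A_0,\,A_1,\,B_1} \; \sum_{i=1}^{N} \bigl( y_i - f(x_i;\, A_0, A_1, B_1) \bigr)^2
$$

In a spreadsheet this would typically be a single cell that sums the squared differences between the observed and predicted columns, with C3, C4, and C5 as the cells Solver is allowed to change.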

Nonlinear regression can serve two distinct goals:

- To fit a model to your data in order to obtain best-fit values of the parameters, or to compare the fits of alternative models.
- To simply fit a smooth curve so you can interpolate values from the curve, or perhaps to draw a graph with a smooth curve. If this is your goal, you can judge the fit by looking at the data plot and the curve. There is no need to learn the deep math behind it.

A random variable with a Gaussian distribution is said to be normally distributed and is called a normal deviate. The normal distribution is sometimes called the bell curve, although other distributions, such as the Cauchy, Student's t, and logistic distributions, are bell-shaped as well.

A common assumption is that the data points are randomly scattered about a curve or a line, with the scatter following a Gaussian distribution. Under this assumption, the next step is to adjust the model's parameters to find the curve that minimizes the sum of squares of the distances of the points from the curve.

You may pause for a second and ask: why minimize the sum of squares of the distances? Would minimizing the sum of the linear distances work?

## Simple Explanation

If the random scatter follows a Gaussian distribution, two moderate deviations are far more likely than one very small and one very large deviation. You are more likely to find two deviations of, say, 10 units each from the curve than one small deviation of 5 units and one large deviation of 15 units. A function that minimized the sum of the absolute values of the distances would have no preference between a curve that was 10 units away from two points and one that was 5 units away from one point and 15 units from another.
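The arithmetic behind this comparison is easy to check. The short sketch below uses the deviations from the text (10 and 10 versus 5 and 15); the function and variable names are illustrative only.

```python
def sum_abs(residuals):
    """Sum of absolute deviations."""
    return sum(abs(r) for r in residuals)


def sum_sq(residuals):
    """Sum of squared deviations (the least-squares objective)."""
    return sum(r * r for r in residuals)


moderate = [10, 10]  # curve is 10 units away from each of two points
uneven = [5, 15]     # curve is 5 units from one point and 15 from the other

print(sum_abs(moderate), sum_abs(uneven))  # 20 20
print(sum_sq(moderate), sum_sq(uneven))    # 200 250
```

The absolute-value criterion scores both curves the same, while the sum-of-squares criterion favors the curve with two moderate deviations.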

For data such as in Figure 14-1, we could proceed in the following manner: using reasonable guesses for $k_1$ and $k_2$, calculate the predicted value at each time data point, then calculate the sum of squares of residuals, $SS_{\text{residuals}} = \sum (\text{calc} - \text{expt})^2$. Our goal is to minimize this error-square sum. We could do this in a true "trial-and-error" fashion, attempting to guess at a better set of $k_1$ and $k_2$ values, then repeating the calculation process to get a new (and hopefully smaller) value for the residuals. Or we could attempt to be more systematic. Starting with our initial guesses for $k_1$ and $k_2$, we could create a two-dimensional array of starting values that bracket our guesses, as in Figure 14-2. (The initial guesses for $k_1$ and $k_2$ were 0.30 and 0.80, respectively, and the array of starting values is 70%, 80%, 90%, 100%, 110%, 120%, and 130% of the respective initial estimates.)

Excel Solver is one of the simplest and easiest curve-fitting tools around. Its curve-fitting capabilities make it an excellent tool for performing nonlinear regression. Solver can be used to find the equation of the linear or nonlinear curve that most closely fits a set of data points, and performing nonlinear regression with it in Excel takes only a few simple steps.
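The systematic bracketing described here can be sketched in a few lines of Python. The text does not give the fitting function or the data, so this sketch assumes a two-step first-order model (A to B to C, with the starting concentration of A set to 1) and generates synthetic, noise-free data from made-up rate constants. Only the initial guesses (0.30 and 0.80) and the 70% to 130% factors come from the text.

```python
import math


def b_calc(t, k1, k2):
    """Assumed model: concentration of intermediate B in A -> B -> C, [A]0 = 1."""
    return k1 / (k2 - k1) * (math.exp(-k1 * t) - math.exp(-k2 * t))


def ss_residuals(k1, k2, times, expt):
    """Sum of squared residuals: sum((calc - expt)^2)."""
    return sum((b_calc(t, k1, k2) - y) ** 2 for t, y in zip(times, expt))


# Synthetic "experimental" data from made-up rate constants (not from the source).
times = [0.5 * i for i in range(1, 21)]
expt = [b_calc(t, 0.33, 0.72) for t in times]

k1_guess, k2_guess = 0.30, 0.80
factors = [0.7, 0.8, 0.9, 1.0, 1.1, 1.2, 1.3]

# 7 x 7 array of starting values bracketing the initial guesses.
grid = {}
for f1 in factors:
    for f2 in factors:
        k1, k2 = k1_guess * f1, k2_guess * f2
        grid[(k1, k2)] = ss_residuals(k1, k2, times, expt)

best_k1, best_k2 = min(grid, key=grid.get)
print(f"best k1={best_k1:.2f}, k2={best_k2:.2f}, SS={grid[(best_k1, best_k2)]:.3g}")
```

The grid point with the smallest sum of squares becomes the new starting guess, and a finer grid or an optimizer such as Solver can refine it from there.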

Unlike for linear regression, there are no analytical expressions to obtain the set of regression coefficients for a fitting function that is nonlinear in its coefficients. To perform nonlinear regression, we must essentially use trial-and-error to find the set of coefficients that minimizes the sum of squares of differences between $y_{\text{calc}}$ and $y_{\text{obsd}}$.
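One way to automate that trial-and-error is a simple compass (pattern) search: nudge each coefficient up and down, keep any change that lowers the sum of squares, and shrink the step when nothing helps. This is a minimal sketch of the idea, not the algorithm Solver uses. The fitting function `y = a * exp(-b * t)`, the synthetic data, and all names are assumptions chosen for illustration.

```python
import math


def model(t, a, b):
    """Hypothetical fitting function, nonlinear in the coefficient b."""
    return a * math.exp(-b * t)


def sum_sq_diff(params, times, y_obsd):
    """Sum of squares of differences between y_calc and y_obsd."""
    a, b = params
    return sum((model(t, a, b) - y) ** 2 for t, y in zip(times, y_obsd))


def compass_search(params, times, y_obsd, step=0.5, tol=1e-8, max_iter=10000):
    """Try +/- step on each coefficient; keep improvements, else halve the step."""
    params = list(params)
    best = sum_sq_diff(params, times, y_obsd)
    for _ in range(max_iter):
        if step < tol:
            break
        improved = False
        for i in range(len(params)):
            for delta in (step, -step):
                trial = list(params)
                trial[i] += delta
                value = sum_sq_diff(trial, times, y_obsd)
                if value < best:
                    params, best, improved = trial, value, True
        if not improved:
            step /= 2  # no neighbor was better, so search on a finer scale
    return params, best


# Synthetic, noise-free observations generated with a = 2.0 and b = 0.5.
times = [0.0, 1.0, 2.0, 3.0, 4.0, 5.0]
y_obsd = [model(t, 2.0, 0.5) for t in times]

fit, ss_min = compass_search([1.0, 1.0], times, y_obsd)
print(fit, ss_min)
```

On real, noisy data the minimum sum of squares will not reach zero, and a poor starting guess can leave a search like this in a local minimum, which is why reasonable initial estimates matter.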